MIT has taken offline a massive and highly cited dataset that trained AI systems to use racist and misogynistic terms to describe people, The Register reports.

The training set, called 80 Million Tiny Images after the number of labeled images it scraped from Google Images, was created in 2008 to develop advanced object detection techniques. It has been used to teach machine-learning models to identify the people and objects in still images.

As The Register’s Katyanna Quach wrote: “Thanks to MIT’s cavalier approach when assembling its training set, though, these systems may also label women as whores or bitches, and Black and Asian people with derogatory language. The database also contained close-up pictures of female genitalia labeled with the C-word.”

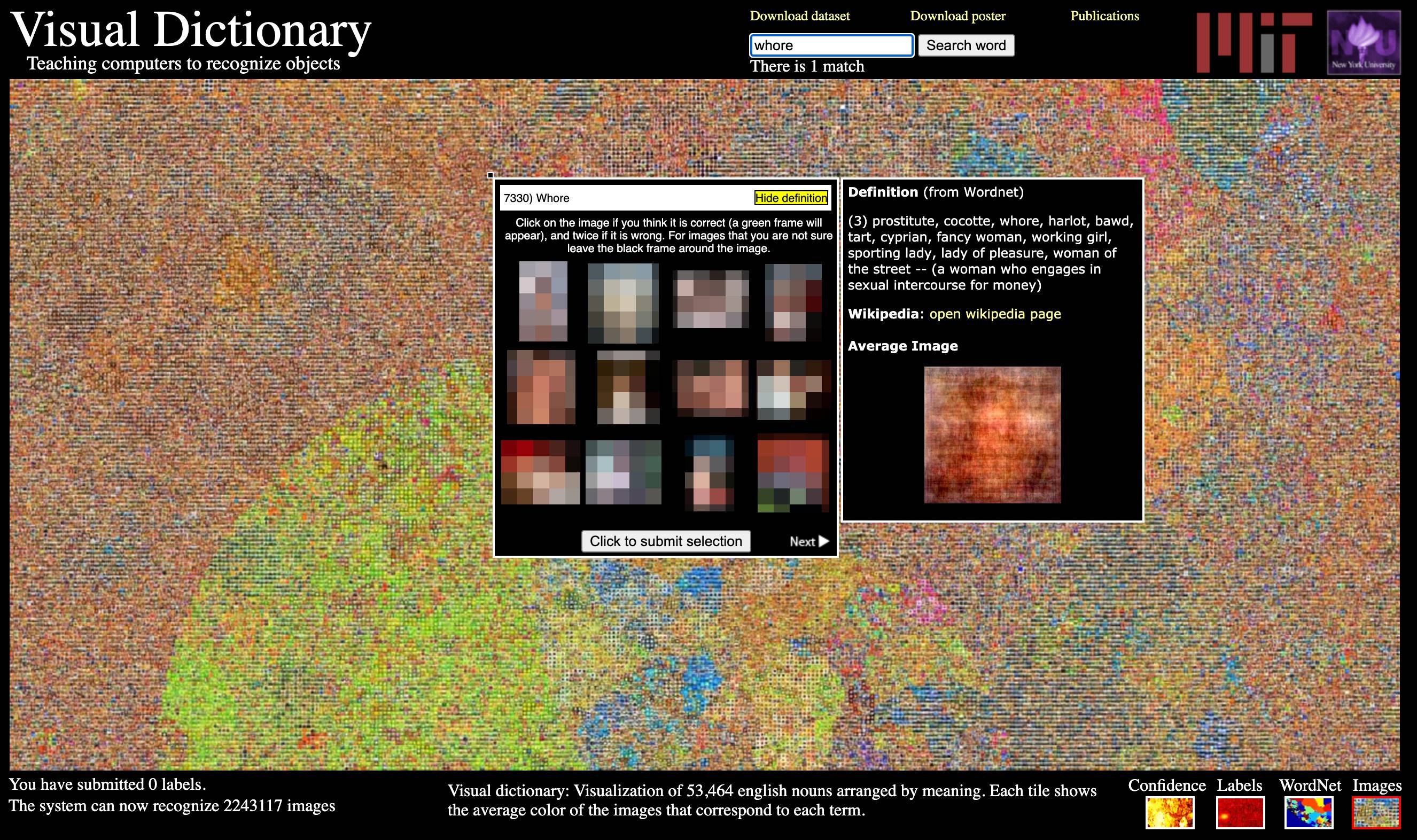

The Register managed to get a screenshot of the dataset before it was taken offline:

80 Million Tiny Images’ “serious ethical shortcomings” were discovered by Vinay Prabhu, chief scientist at privacy startup UnifyID, and Abeba Birhane, a PhD candidate at University College Dublin. They revealed their findings in the paper “Large image datasets: A pyrrhic win for computer vision?”, which is currently under peer review for the 2021 Winter Conference on Applications of Computer Vision (WACV).

The damage done by such ethically dubious datasets reaches far beyond bad taste: the dataset has been fed into neural networks, teaching them to associate images with words. This means any AI model trained on the dataset is learning racism and sexism, which could result in sexist or racist chatbots, racially biased software, and worse. Earlier this year, Robert Williams was wrongfully arrested in Detroit after a facial recognition system mistook him for another Black man. Garbage in, garbage out.

As Quach wrote: “The key problem is that the dataset includes, for example, pictures of Black people and monkeys labeled with the N-word; women in bikinis, or holding their children, labeled whores; parts of the anatomy labeled with crude terms; and so on – needlessly linking everyday imagery to slurs and offensive language, and baking prejudice and bias into future AI models.”

After The Register alerted the university about the training set this week, MIT removed the dataset and urged researchers and developers to stop using it and to delete all copies. The university also published an official statement and apology on its site:

It has been brought to our attention that the Tiny Images dataset contains some derogatory terms as categories and offensive images. This was a consequence of the automated data collection procedure that relied on nouns from WordNet. We are greatly concerned by this and apologize to those who may have been affected.

The dataset is too large (80 million images) and the images are so small (32 x 32 pixels) that it can be difficult for people to visually recognize its content. Therefore, manual inspection, even if feasible, will not guarantee that offensive images can be completely removed.

We therefore have decided to formally withdraw the dataset. It has been taken offline and it will not be put back online. We ask the community to refrain from using it in future and also delete any existing copies of the dataset that may have been downloaded.

Examples of AI showing racial and gender bias and discrimination are numerous. As TNW’s Tristan Greene wrote last week: “All AI is racist. Most people just don’t notice it unless it’s blatant and obvious.”

“But AI isn’t a racist being like a person. It doesn’t deserve the benefit of the doubt, it deserves rigorous and constant investigation. When it recommends higher prison sentences for Black males than whites, or when it can’t tell the difference between two completely different Black men it demonstrates that AI systems are racist,” Greene wrote. “And, yet, we still use these systems.”

Source: TheNextWeb